First of all, thanks to @definitelynotadog, and please give him some love, as I used his workflow as a reference when building the Dual KSampler. https://civitai.com/models/1622023?modelVersionId=1835720

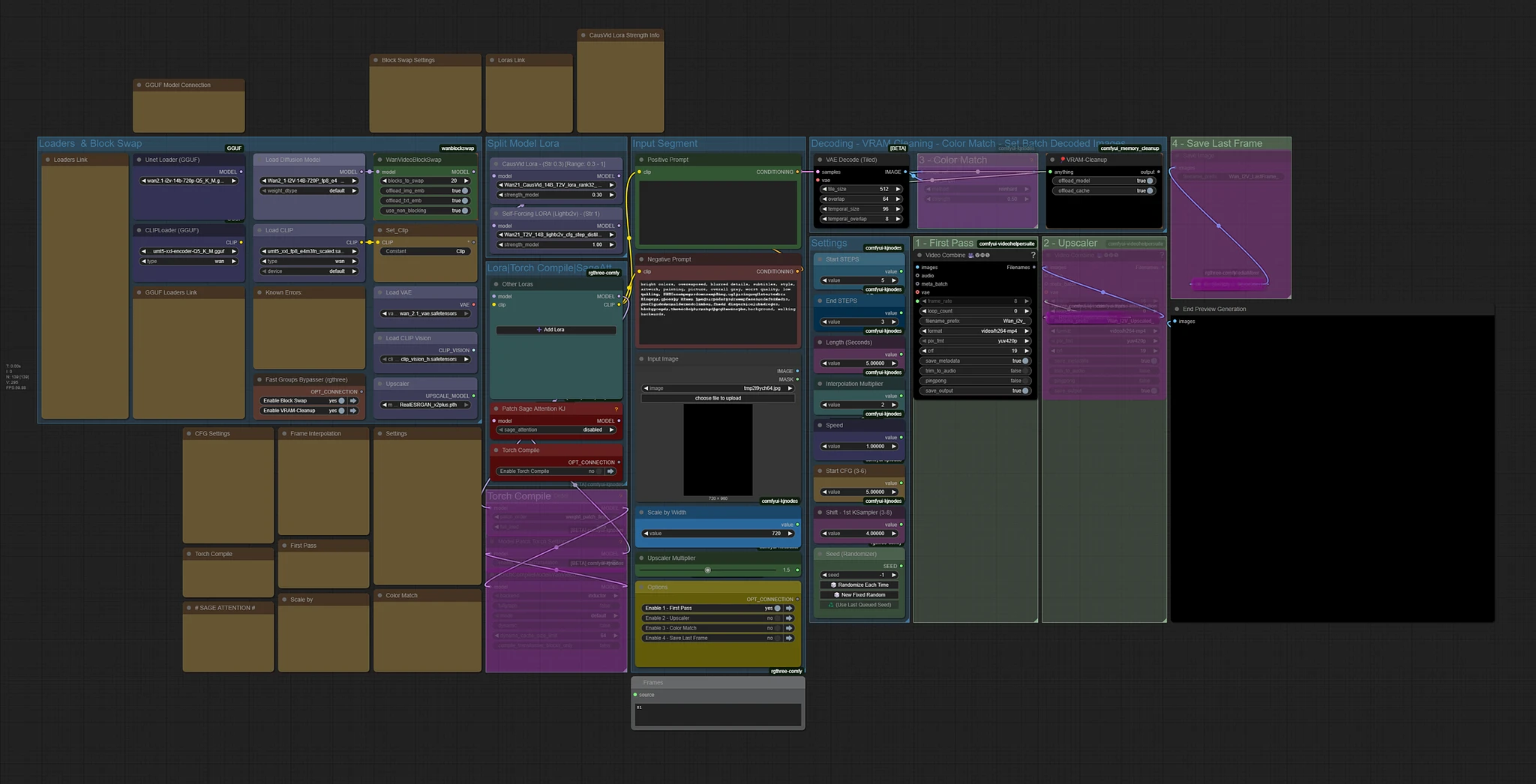

Wan 2.1 i2v (Native) Self-Forcing(lightx2v) Dual Sampling for Better Motions

This i2v workflow is built with the following intentions in mind:

Built for use with the Self-Forcing(lightx2v) Lora (but not limited to it)

For learning and exploration

Experimental purposes

Modular Sections (add, build upon, swap or extract part of the workflow)

-

Exploded View - to see all connections

This workflow includes:

Block Swap for memory management

Sage Attention (switch on if you have it installed)

Torch Compile

Stack Lora Loader

Scale by Width for Image Dimension Adjustment

Video Speed Control

Frame Interpolation for Smoothing

Auto Calculation of the Number of Frames and Frame Rate based on the video length, speed and interpolation multiplier inputs

Save Last Frame

Previews for both the First KSampler Latent and the Final Video Images

Dual Sampling using 2 KSamplers

VRAM Clean-up

Upscaler (up to 2x)
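Here is a rough idea of how such an auto calculation can work, as a hypothetical Python sketch. It is not taken from the workflow's nodes. It rests on these assumptions:

- Wan 2.1 renders natively at 16 fps.
- Frame counts take the 4n + 1 form Wan expects (81 frames is 5 seconds at 16 fps, plus 1).
- Interpolation adds (multiplier - 1) frames between each pair of frames.
- Speed simply scales the playback frame rate.

The function names are made up for illustration.

```python
BASE_FPS = 16  # assumption: native Wan 2.1 frame rate


def frames_for_length(seconds: float, base_fps: int = BASE_FPS) -> int:
    """Frames to generate, snapped to the nearest 4n + 1 value (assumed Wan constraint)."""
    raw = round(seconds * base_fps)
    n = max(0, round((raw - 1) / 4))
    return 4 * n + 1


def interpolated_frames(frames: int, multiplier: int) -> int:
    """Frame count after interpolation, assuming (multiplier - 1) new frames per gap."""
    return (frames - 1) * multiplier + 1


def output_fps(multiplier: int, speed: float = 1.0, base_fps: int = BASE_FPS) -> float:
    """Playback rate that keeps the clip length after interpolation, scaled by speed."""
    return base_fps * multiplier * speed


if __name__ == "__main__":
    frames = frames_for_length(5)
    print(frames, interpolated_frames(frames, 4), output_fps(4))
```

Under these assumptions, a 5-second clip gives 81 frames. A 4x multiplier turns that into 321 frames, and a playback rate of 64 fps keeps the clip at about 5 seconds.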

-

Template for external Frame Interpolation

Videos posted above are without speed adjustment.

The videos include the embedded workflow, except the joint video comparison.

Links to the model/Lora files are in the workflow.

The Start CFG may differ between videos, depending on the other Loras I used.

Models to use:

The workflow can use either the Wan2.1 14B 480p or the 720p i2v model.

The 720p model and higher-resolution images are recommended as they give better quality, especially for eyes and teeth during motion.

Examples:

5 first steps / 3 last steps / 81 frames

480p model - 480x640 Image

480p model - 480x640 Image

480p model - 720x960 Image

480p model - 720x960 Image

720p model - 480x640 Image

720p model - 480x640 Image

720p model - 720x960 Image

720p model - 720x960 Image
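The example images above all use a 3:4 aspect ratio (480x640 and 720x960). The Python below is a hedged sketch of what a "scale by width" step does: it resizes to a target width while keeping the aspect ratio. Rounding both sides to a multiple of 16 is my assumption about Wan-friendly dimensions, not a setting read from the workflow. `scale_by_width` is a hypothetical helper.

```python
def scale_by_width(src_w: int, src_h: int, target_w: int, multiple: int = 16) -> tuple[int, int]:
    """Resize to target_w while keeping the aspect ratio.

    Both sides are rounded to `multiple` (16 is an assumed constraint).
    """
    # Compute the height before dividing to keep the float math exact where possible.
    new_h = src_h * target_w / src_w
    h = round(new_h / multiple) * multiple
    w = round(target_w / multiple) * multiple
    return w, h


if __name__ == "__main__":
    print(scale_by_width(1080, 1440, 720))
    print(scale_by_width(1080, 1440, 480))
```

For example, a 1080x1440 source scaled to width 720 gives 720x960. Scaled to width 480, it gives 480x640. These match the two image sizes tested above, and the smaller one is the size to use when drafting for motion.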

Generation speed on my RTX 3090 Ti 24GB with Sage Attention and no GGUF, using 5 first steps + 3 last steps (8 steps total), 81 frames and a 4x frame interpolation multiplier:

720 x 960 Image: ~750 secs (est. 12-13 mins)

480 x 640 Image: ~350 secs (est. 5-6 mins)

Note:

Some Loras may distort faces or the character. Either reduce the Lora strength or use an alternative Lora.

Sometimes you may need to generate a few times to get a seed with better motion. Be patient.

-

I did not test every Lora, so you will need to test and figure out the settings yourself:

(480p/720p Model, Image Dimension, Lora Strength, Start CFG)

-

*If you find that the other Loras you are using with this workflow are too aggressive (too much motion, color changes, sudden exposure shifts), lower the Start CFG (to 4-2). Otherwise leave it at 5.

Some videos I posted above used a lower CFG because the other Loras were too aggressive at a high CFG level.

Drafting for motion with other Loras:

Use a smaller image dimension for faster generation to see whether the Lora you use produces any motion.

-

Once you are satisfied with the Lora and prompt, proceed with your desired image dimension.

Other tips:

You can also clean up distortion/blur by using another V2V workflow.

Like this one:

https://civitai.com/models/1714513/video-upscale-or-enhancer-using-wan-fusionx-ingredients

and/or use face swap to fix face distortions.

Dual KSampler

Recommended Steps:

5 start steps / 3 end steps (the split I used most for testing)

-

4 start steps / 2 end steps

Self-Forcing(lightx2v) may sometimes hinder or slow down motion, or produce less motion, with some Loras.

To get more motion from some Loras, you need a CFG higher than 1, but when using Self-Forcing(lightx2v), you need to set the CFG to 1.

This is where the Dual KSampler comes in.

-

The 1st KSampler uses a high CFG of 3-5 to create a better "motion latent", together with the CausVid Lora to get more motion with fewer generation steps.

-

The 2nd KSampler uses a low CFG of 1 to finish the video in 2-3 steps, using the Self-Forcing(lightx2v) Lora for fast generation. A higher step count lets the Self-Forcing(lightx2v) Lora influence the video more, which reduces motion again.

To pass the "motion latent info" to the 2nd sampler, the 1st sampler's step count has to be more than half of the total steps.

Examples:

5 first steps / 3 last steps / 8 total steps

4 first steps / 2 last steps / 6 total steps

or even 3 first steps / 1 last step / 4 total steps (if you feel like experimenting)
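The "more than half" rule can be written as a small check. `split_steps` is a hypothetical helper, not part of the workflow. It takes the first and last step counts and returns the (start, end) step range for each KSampler. It refuses splits where the 1st KSampler gets half of the total steps or fewer.

```python
def split_steps(first: int, last: int) -> tuple[tuple[int, int], tuple[int, int]]:
    """Return ((start, end) for KSampler 1, (start, end) for KSampler 2)."""
    total = first + last
    # The 1st KSampler must run more than half of the total steps.
    if first * 2 <= total:
        raise ValueError(
            f"{first} first steps is not more than half of {total} total steps"
        )
    return (0, first), (first, total)


if __name__ == "__main__":
    for first, last in [(5, 3), (4, 2), (3, 1)]:
        print(first, last, split_steps(first, last))
```

Since total = first + last, the rule is the same as first > last. All three examples above satisfy it. A 4/4 split does not, and would leave the 2nd KSampler with the very noisy latent described below.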

When it is configured this way, you can see the images start to form in the latent preview:

That is when it can be passed to the 2nd KSampler with the "motion latent info", which finishes it off in only a few steps without heavily influencing it.

(If the 1st step count is half of the total steps or less, you will see a very noisy image that does not resemble anything.)

Basically, a 6-8 step generation is split across 2 KSamplers.

The 2nd KSampler continues the generation process from where the 1st KSampler left off.

(Using 2 normal KSamplers will not produce the same result, because the 2nd sampler does not know which step to continue from. It takes the output of the 1st KSampler, disregards the progress already made and starts again from step 0.)

Unfortunately, a KSampler with start/end steps is only available in Native, not in WanWrapper.
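In ComfyUI's core nodes, start/end steps are exposed by "KSampler (Advanced)" (`KSamplerAdvanced`). Its inputs include `add_noise`, `noise_seed`, `steps`, `cfg`, `sampler_name`, `scheduler`, `start_at_step`, `end_at_step` and `return_with_leftover_noise`. The Python below is a hedged sketch of how the two samplers' widget values could be set for a 5/3 split. I'm assuming this is the node used, though the workflow may differ. The seed, `sampler_name` and `scheduler` defaults are placeholders, `dual_ksampler_settings` is hypothetical, and the model, conditioning and latent links are left out.

```python
def dual_ksampler_settings(first_steps: int, last_steps: int, start_cfg: float = 5.0,
                           seed: int = 0, sampler_name: str = "euler",
                           scheduler: str = "simple") -> tuple[dict, dict]:
    """Widget values for two KSamplerAdvanced nodes sharing one step schedule."""
    total = first_steps + last_steps
    # Both samplers see the same total step count so they share one noise schedule.
    common = {"noise_seed": seed, "steps": total,
              "sampler_name": sampler_name, "scheduler": scheduler}
    # 1st sampler (model with the CausVid Lora): high CFG, stops early, keeps its noise.
    first = {**common, "add_noise": "enable", "cfg": start_cfg,
             "start_at_step": 0, "end_at_step": first_steps,
             "return_with_leftover_noise": "enable"}
    # 2nd sampler (model with the Self-Forcing Lora): CFG 1, resumes without adding noise.
    second = {**common, "add_noise": "disable", "cfg": 1.0,
              "start_at_step": first_steps, "end_at_step": total,
              "return_with_leftover_noise": "disable"}
    return first, second


if __name__ == "__main__":
    ks1, ks2 = dual_ksampler_settings(5, 3)
    print(ks1)
    print(ks2)
```

The 1st sampler returns its leftover noise, and the 2nd runs with `add_noise` disabled. As a result, the 2nd picks up at step 5 of the same 8-step schedule instead of starting again from step 0.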

Tooooo many GET and SET nodes....

You can use the Nodes Map Search (Shift+m) function.

In your ComfyUI interface panel (usually on the left), look for an icon with 1 small square on top and 3 small squares below it. It's called Nodes Map.

Let's say you see a "Set_FrameNum" node.

And you want to know where the "Get_FrameNum" is.

Enter in the search bar:

Get_FrameN... --! Case Sensitive !--

And you will see it filtered.

Double click on that and it will bring you to the node.

Likewise, starting from a Get node:

Example for "Get_FrameNum"

Search:

Set_FrameNum --! Case Sensitive !--

Filtered.

Double click.

Custom Nodes

ComfyUI-Custom-Scripts

rgthree-comfy

ComfyUI-KJNodes

ComfyUI-Frame-Interpolation

ComfyUI-mxToolkit

ComfyUI-MemoryCleanup

ComfyUI-wanBlockswap

-

MediaMixer

After notes:

You may build upon, use part of, edit, merge and publish without crediting me.

I don't use GGUF because it bricked my ComfyUI every time I tried installing it.

I do not have a more in-depth understanding beyond this point.

Version 🟥v1.0 - Old: 1 File

About this version: 🟥v1.0 - Old

First Version

17 Versions
